Azure Functions

Eliminate Cold Starts by Predicting Invocations of Serverless Functions

Azure Functions introduce a data-driven strategy to pre-warm serverless applications right before the next request comes in

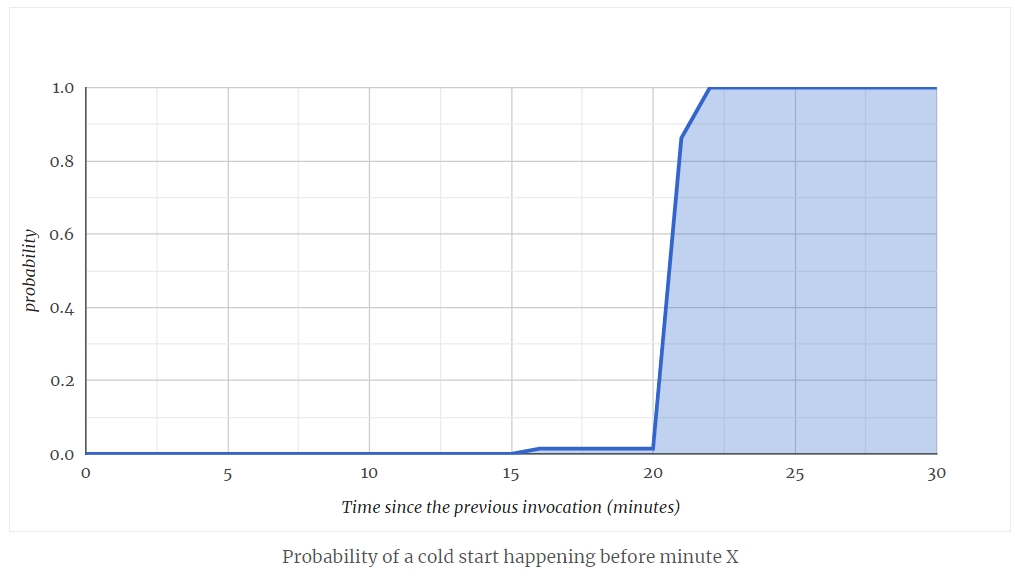

When Does Cold Start Happen on Azure Functions?

The first cold start happens when the very first request comes in after deployment.

After that request is processed, the instance stays alive for the time being to be reused for subsequent requests. But for how long?

Comparison of Cold Starts in Serverless Functions across AWS, Azure, and GCP

AWS Lambda, Azure Functions, and Google Cloud Functions compared in terms of cold starts across all supported languages

Cold Starts in Azure Functions

Influence of dependencies, language, and runtime selection on the Consumption Plan

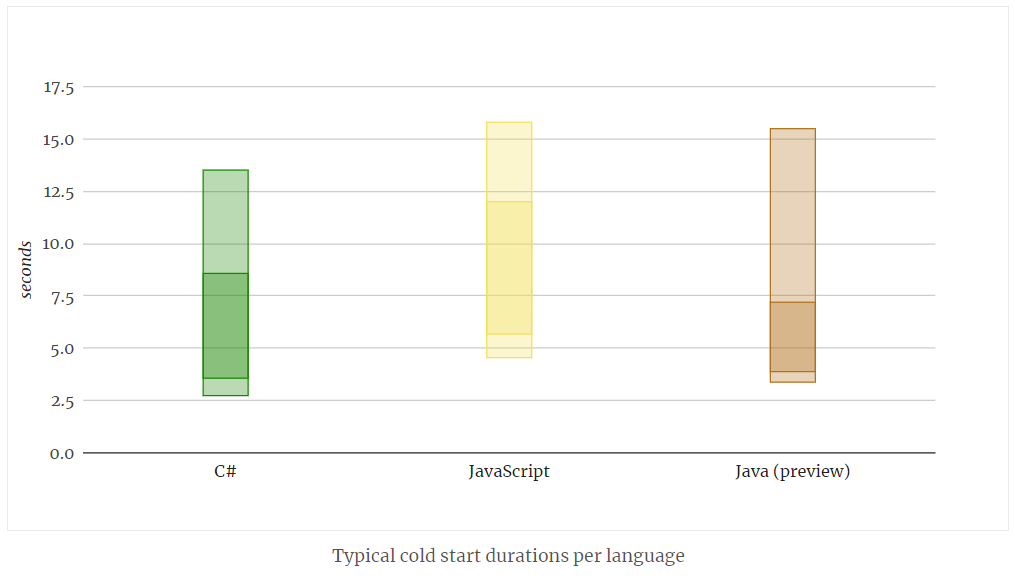

Azure Functions: Cold Start Duration per Language

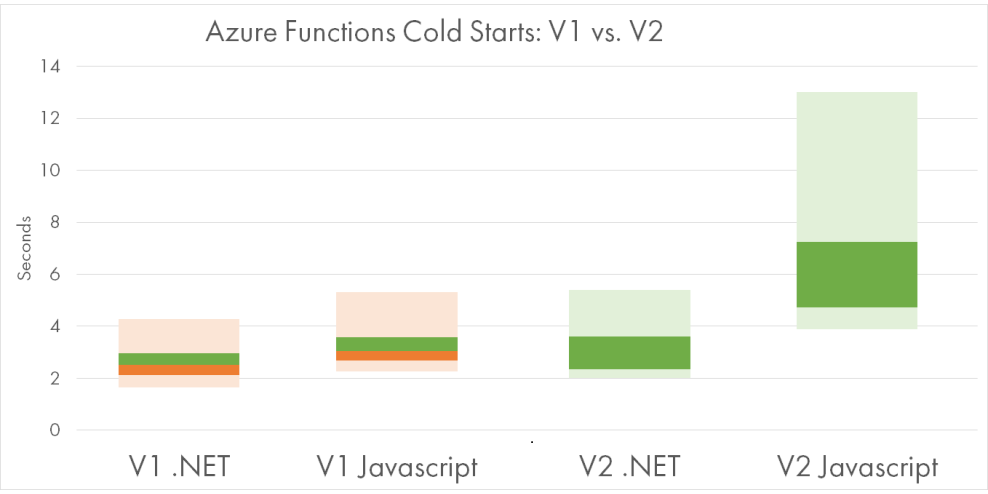

The following chart shows the typical range of cold starts in Azure Functions, broken down per language. The darker ranges cover the most common 67% of durations, and the lighter ranges cover 95%.

Serverless in the Wild: Azure Functions Production Usage Statistics

Insightful statistics about the actual production usage of Azure Functions, based on the data from Microsoft's paper

Hosting Azure Functions in Google Cloud Run

Running Azure Functions Docker container inside Google Cloud Run managed service

Choosing the Best Deployment Tool for Your Serverless Applications

Factors to consider while deploying cloud infrastructure for serverless apps.

AWS Lambda vs. Azure Functions: 10 Major Differences

A comparison of AWS Lambda with Azure Functions, focusing on their unique features and limitations.

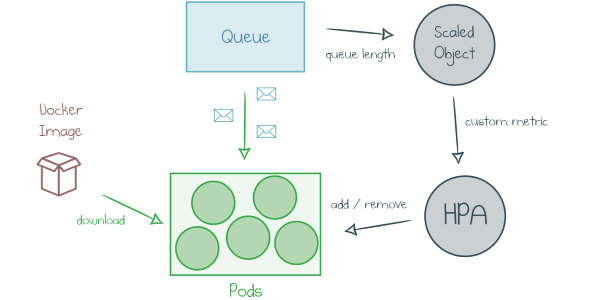

How To Deploy a Function App with KEDA (Kubernetes-based Event-Driven Autoscaling)

Hosting Azure Functions in Kubernetes: how it works and the simplest way to get started.

Ten Pearls With Azure Functions in Pulumi

Ten bite-sized code snippets that use Pulumi to build serverless applications with Azure Functions and infrastructure as code.

How to Measure the Cost of Azure Functions

Azure pricing can be complicated. To get the most value out of your cloud platform, you need to know how to track spend and measure the costs incurred by Azure Functions.

Load-Testing Azure Functions with Loader.io

Verifying your Function App as a valid target for cloud load testing.

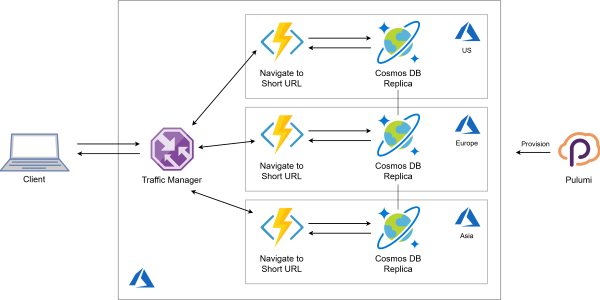

Globally-distributed Serverless Application in 100 Lines of Code. Infrastructure Included!

Building a serverless application on Azure with both the data store and the HTTP endpoint located close to end users for fast response time.

Serverless as Simple Callbacks with Pulumi and Azure Functions

The simplest way to take a Node.js function and deploy it to Azure cloud as an HTTP endpoint using Pulumi.

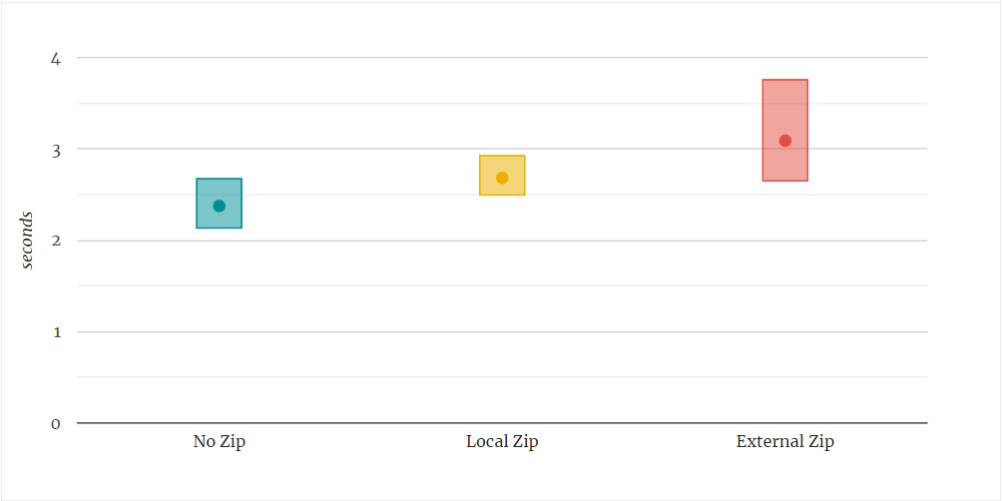

Reducing Cold Start Duration in Azure Functions

The influence of the deployment method, application insights, and more on Azure Functions cold starts.

Concurrency and Isolation in Serverless Functions

Exploring approaches to sharing or isolating resources between multiple executions of the same cloud function and the associated trade-offs.

Serverless at Scale: Serving StackOverflow-like Traffic

Scalability test for HTTP-triggered serverless functions across AWS, Azure and GCP

A Fairy Tale of F# and Durable Functions

How F# and Azure Durable Functions make children happy (most developers are still kids at heart)

Making Sense of Azure Durable Functions

The why and how of stateful workflows on top of serverless functions

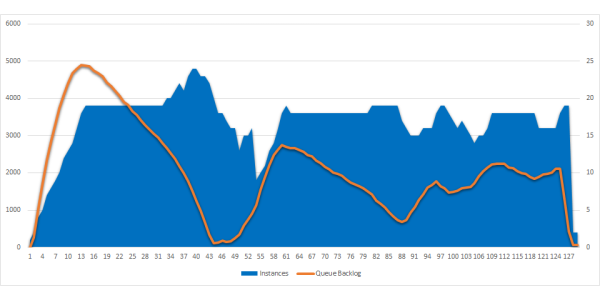

From 0 to 1000 Instances: How Serverless Providers Scale Queue Processing

Comparison of queue processing scalability for FaaS across AWS, Azure and GCP

Azure Functions V2 Is Released, How Performant Is It?

Comparison of performance benchmarks for Azure Functions V1 and V2

Serverless: Cold Start War

Comparison of cold start statistics for FaaS across AWS, Azure and GCP

Cold Starts Beyond First Request in Azure Functions

Can we avoid cold starts by keeping Functions warm, and will cold starts occur on scale out? Let's try!

Azure Functions: Cold Starts in Numbers

Auto-provisioning and auto-scalability are the killer features of Function-as-a-Service cloud offerings, and Azure Functions in particular.

One drawback of such dynamic provisioning is a phenomenon called “Cold Start”. Basically, applications that haven’t been used for a while take longer to start up and to handle the first request.

Awesome F# Exchange 2018

I’m writing this post on the train to London Stansted, on my way back from the F# Exchange 2018 conference.

F# Exchange is a yearly conference taking place in London, and the 2018 edition was my first one. I also had the honour of speaking there about creating Azure Functions with F#.

Azure Durable Functions in F#

Azure Functions are designed for stateless, fast-to-execute, simple actions. Typically, they are triggered by an HTTP call or a queue message, then they read something from storage or a database and return the result to the caller or send it to another queue. All within several seconds at most.

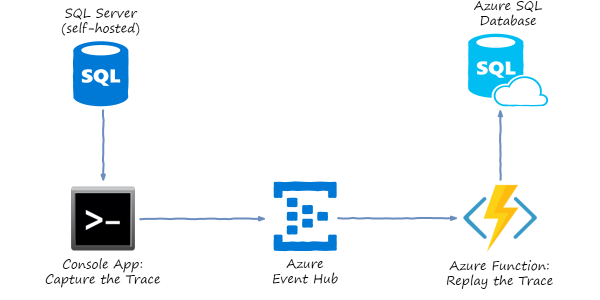

Load Testing Azure SQL Database by Copying Traffic from Production SQL Server

Azure SQL Database is a managed service that provides low-maintenance SQL Server instances in the cloud. You don’t have to run and update VMs, or even take backups and set up failover clusters. Microsoft does the administration for you; you just pay an hourly fee.

Tic-Tac-Toe with F#, Azure Functions, HATEOAS and Property-Based Testing

A toy application built with F# and Azure Functions: a simple end-to-end implementation from domain design to property-based tests.

Azure Functions Get More Scalable and Elastic

Back in August this year, I posted Azure Functions: Are They Really Infinitely Scalable and Elastic? with two experiments about Azure Function App autoscaling. I ran a simple CPU-bound function based on Bcrypt hashing and measured how well Azure ran my Function under load.

Precompiled Azure Functions in F#

This post kicks off the F# Advent Calendar in English 2017. Please follow the calendar for all the great posts to come.

Azure Functions is a “serverless” cloud offering from Microsoft. It allows you to run your custom code in response to events in the cloud. Functions are very easy to get started with, and you only pay per execution, with a free allowance sufficient for any proof-of-concept, hobby project or even low-usage production loads. And when you need more, Azure will scale your project up automatically.

Azure F#unctions Talk at FSharping Meetup in Prague

On November 8th 2017 I gave a talk about developing Azure Functions in F# at FSharping meetup in Prague.

I really enjoyed giving this talk: the audience was great and asked awesome questions. One more proof that the F# community is so welcoming and energizing!

Azure Function Triggered by Azure Event Grid

Update: I missed the elephant in the room. There actually exists a specialized trigger for Event Grid binding. In the portal, just select Experimental in the Scenario drop-down while creating the function. In precompiled functions, reference the Microsoft.Azure.WebJobs.Extensions.EventGrid NuGet package.

Azure Functions: Are They Really Infinitely Scalable and Elastic?

Updated results are available at Azure Functions Get More Scalable and Elastic.

Automatic elastic scaling is a built-in feature of the serverless computing paradigm. One doesn’t have to provision servers anymore; one just needs to write code that will be provisioned on as many servers as needed based on the actual load. That’s the theory.

Authoring a Custom Binding for Azure Functions

The process of creating a custom binding for Azure Functions.

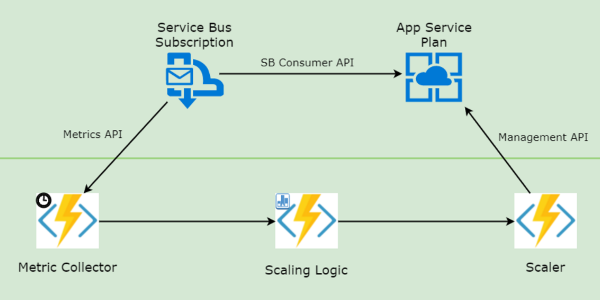

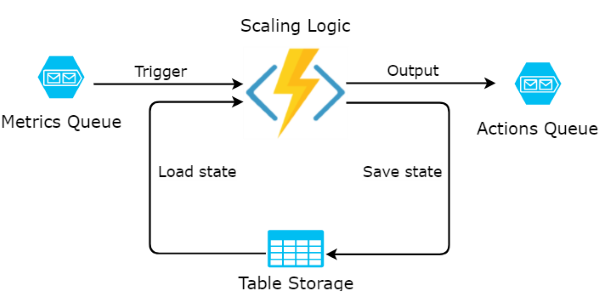

Custom Autoscaling with Durable Functions

Leverage Azure Durable Functions to scale out and scale in an App Service based on a custom metric

Custom Autoscaling of Azure App Service with a Function App

How to scale out and scale in an App Service based on a custom metric

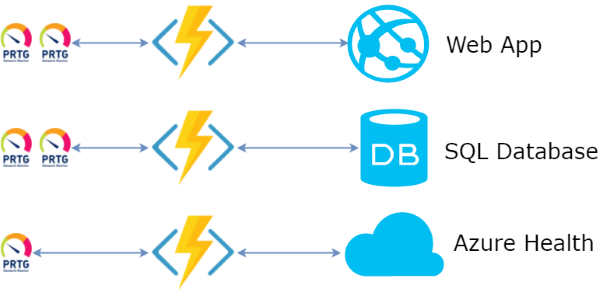

Azure Functions as a Facade for Azure Monitoring

Azure Functions are the Function-as-a-Service offering from Microsoft Azure cloud. Basically, an Azure Function is a piece of code that gets executed by Azure every time an event of some kind happens. The environment manages deployment, event triggers and scaling for you. This approach is often referred to as Serverless.
